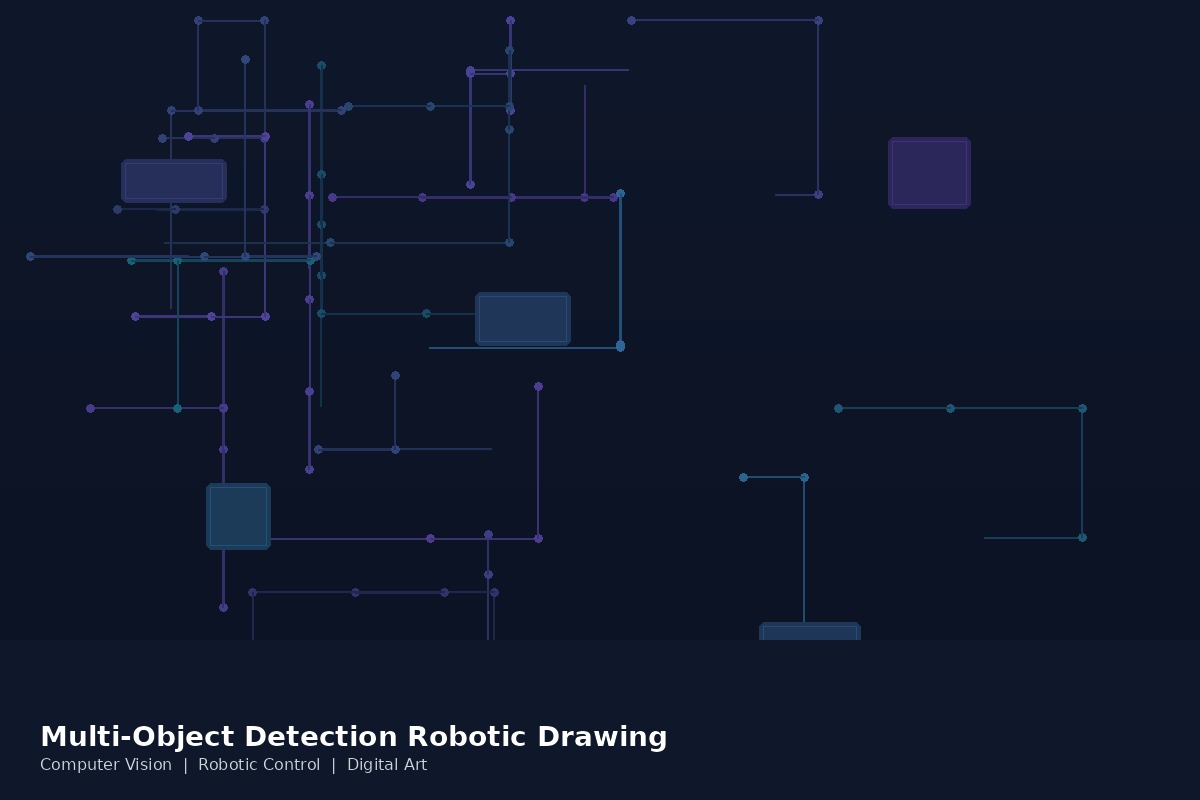

Multi-Object Detection and Robotic Drawing with Multiple Camera Sensors

Developed methods that integrate AI-based multi-object detection with robotic drawing for digital art

Overview

This project extended our robot drawing research by giving the robot the ability to see the world through multiple cameras and draw what it sees. Rather than working from a pre-loaded image, the system uses multi-camera object detection to identify objects in a real scene, then directs a robotic arm to draw them. The result is a robot that observes and sketches its environment in real time.

Technical Approach

The system uses an array of camera sensors to capture a scene from multiple angles, providing depth information and better coverage than a single camera. A multi-object detection model identifies and localizes objects in the scene, then passes this information to the drawing pipeline.
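
As a rough illustration of how detections from several cameras might be combined before they reach the drawing pipeline, the sketch below merges detections that share a label and lie close together. It is not the project's actual implementation. The `Detection` fields, the `fuse_detections` function, the `max_dist` threshold, and the assumption that every camera's detections have already been projected into one shared scene frame are all hypothetical.

```python
from dataclasses import dataclass
from math import hypot


@dataclass
class Detection:
    camera_id: int     # which camera produced this detection
    label: str         # predicted class name
    confidence: float  # detector score in [0, 1]
    x: float           # object centre in a shared scene frame (assumed)
    y: float


def fuse_detections(detections, max_dist=0.1):
    """Greedily merge same-label detections that lie within max_dist.

    Positions are averaged with confidence weights, so a camera with a
    clearer view contributes more to the fused estimate.
    """
    fused = []
    # Visit the most confident detections first so they seed the clusters.
    for det in sorted(detections, key=lambda d: -d.confidence):
        for obj in fused:
            cx = obj["sx"] / obj["w"]
            cy = obj["sy"] / obj["w"]
            same_label = obj["label"] == det.label
            if same_label and hypot(det.x - cx, det.y - cy) <= max_dist:
                obj["sx"] += det.confidence * det.x
                obj["sy"] += det.confidence * det.y
                obj["w"] += det.confidence
                obj["cameras"].add(det.camera_id)
                break
        else:
            # No nearby object with this label yet: start a new one.
            fused.append({
                "label": det.label,
                "sx": det.confidence * det.x,
                "sy": det.confidence * det.y,
                "w": det.confidence,
                "best": det.confidence,
                "cameras": {det.camera_id},
            })
    return [
        {
            "label": o["label"],
            "x": o["sx"] / o["w"],
            "y": o["sy"] / o["w"],
            "confidence": o["best"],
            "cameras": sorted(o["cameras"]),
        }
        for o in fused
    ]
```

An object reported by more than one camera ends up as a single entry whose `cameras` list records every viewpoint that saw it, which is one simple way to benefit from the extra coverage described above.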

The AI component bridges perception and action: computer vision models detect and classify objects, while the drawing system translates visual information into stroke sequences and robot arm movements. The multi-camera setup also helps the system handle occlusion and perspective distortion: combining information from different viewpoints lets it draw objects more accurately.
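
The step from visual information to arm movements can be pictured with a minimal sketch like the one below, which scales pixel-space strokes onto a sheet of paper and emits pen commands. The command names (`move`, `pen_down`, `pen_up`), the function names, and the assumption that the paper frame shares the image's origin and axis orientation are illustrative only and do not describe the project's real robot interface.

```python
def pixel_to_paper(point, image_size, paper_size_mm):
    """Scale a pixel coordinate to paper millimetres, keeping aspect ratio."""
    px, py = point
    img_w, img_h = image_size
    paper_w, paper_h = paper_size_mm
    # A single uniform scale factor avoids stretching the drawing.
    scale = min(paper_w / img_w, paper_h / img_h)
    return (px * scale, py * scale)


def strokes_to_commands(strokes, image_size, paper_size_mm):
    """Turn pixel-space polylines into a flat list of pen commands.

    Each stroke is a list of (x, y) pixel points. Strokes with fewer
    than two points are skipped because they would draw nothing.
    """
    commands = []
    for stroke in strokes:
        if len(stroke) < 2:
            continue
        points = [pixel_to_paper(p, image_size, paper_size_mm) for p in stroke]
        # Travel to the start with the pen raised, then draw the polyline.
        commands.append(("move", *points[0]))
        commands.append(("pen_down",))
        for x, y in points[1:]:
            commands.append(("move", x, y))
        commands.append(("pen_up",))
    return commands
```

In a real system the `move` targets would still have to pass through the arm's own kinematics and calibration. The sketch only shows the ordering of pen-up travel and pen-down drawing.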

What Makes It Different

Most robotic drawing systems work from digital images. This project created a system that perceives a physical environment and interprets it artistically, much like an artist sitting at an easel and sketching what is in the room.

Applications

The technology has potential applications in interactive art installations (where visitors become subjects for robot portraits), educational demonstrations of AI perception, and research into how machines can develop visual interpretation skills that combine detection with creative expression.

Collaborators

- Cheju Halla University: Lead research institution

- AI and Robotics Research Teams: Technical collaboration